If you work in tech or run an online business, you have probably heard the name Anthropic pop up next to OpenAI and ChatGPT. Maybe a colleague mentioned Claude, or you saw someone on X saying it feels “calmer” or “more careful” than other AI tools. You might be wondering: is this just more AI hype, or is there something genuinely different here for businesses that care about reliability and trust?

In this guide, I want to explain Anthropic in plain language, the way I’d describe it to a friend who runs a SaaS or an online store. No buzzwords, no magic — just what Anthropic is, why it exists, and how tools like Claude can actually fit into day‑to‑day work, especially when it comes to uptime, incidents, and customer communication.

What Is Anthropic? A Clear, Honest Guide for Businesses

Anthropic is an AI company that spends most of its effort not on making the loudest or flashiest model, but on building systems that behave responsibly and predictably. The team includes researchers who previously worked on some of the early large language models and then decided to focus more directly on safety, reliability, and long‑term risk. Instead of asking only “how powerful can we make this?”, they keep coming back to “how do we make sure this does not cause harm in the first place?”

The product most people know Anthropic for is Claude, its AI assistant. You can use Claude to read and summarize long documents, draft content, review policies, and help teams reason through complex situations step by step. To a non‑technical user, it looks similar to many other chat‑based AI tools. The difference becomes obvious when you put it in front of tasks where accuracy, tone, and caution matter more than raw creativity.

Why Anthropic exists: safety before hype

This issue is more significant than most people realize.

If you have used any AI chatbot for more than a few minutes, you have seen the core problem Anthropic is trying to solve. These systems are very good at sounding confident, even when they are guessing. They can blend partial facts with invented details and present the result in a smooth, friendly paragraph that looks believable at first glance. For a personal side project, that might be fine. For a business handling customer data, legal documents, or financial decisions, it clearly is not.

Anthropic was created around the idea that this behavior needs stronger guardrails. Their models are trained to follow a written “constitution”: a set of principles that emphasize safety, honesty, and avoidance of harmful instructions. The goal is not perfection (no AI system is perfect), but to reduce the number of dangerous, misleading, or overly confident answers and to make the model more transparent when it is uncertain.

That idea is where Anthropic came from. Rather than asking “How intelligent can we make this?”, the team focused on “How do we prevent this from causing harm?”

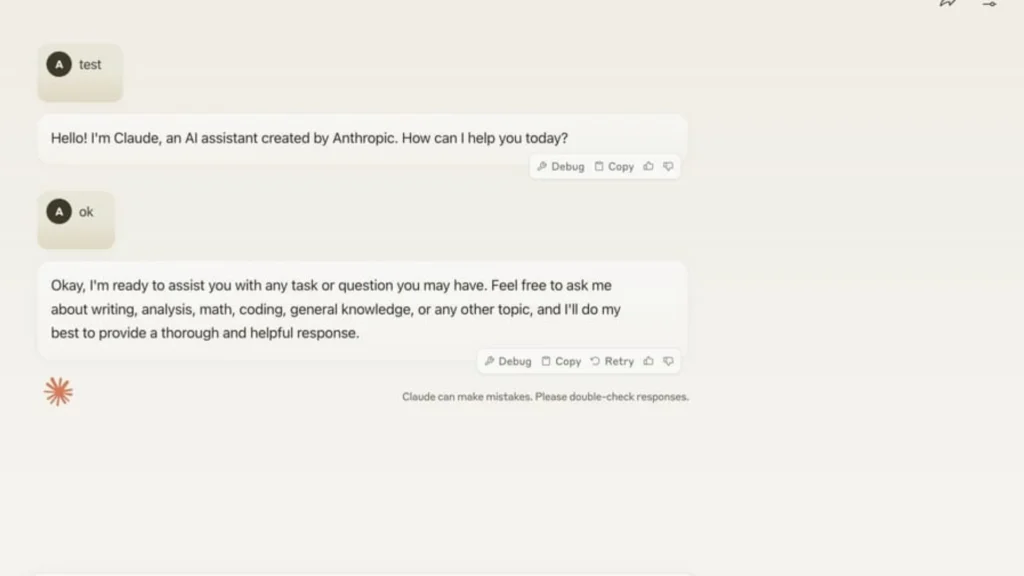

Claude, Explained Without Marketing Talk

Claude is Anthropic’s flagship AI.

At first glance, it is just a regular AI assistant. You write a question. The AI answers. Nothing fancy.

The difference emerges when you put it to serious work.

Claude is very good at digesting long documents: policies, reports, internal documents, and technical explanations. The kind of text that makes a person throw in the towel halfway through page two.

It doesn’t hurry. It doesn’t exaggerate. It is less inclined to make things up.

And most of the time, when it is uncertain, it just says so.

For businesses, that kind of behavior matters more than creativity.

Departments use Claude to:

- summarize a lengthy internal document

- help customer service teams draft consistent responses

- review policies and procedures

- explain technical incidents to non-technical colleagues

- keep knowledge bases in order as they grow

Claude is not about trying to dazzle people. Its focus is on being accurate.

Honestly, that’s a fair trade.

What Makes Anthropic Different from Other AI Companies?

Let’s get right to the point.

Most AI companies chase capability. Anthropic chases behavior.

Where Claude stands out is in long, “boring” work that humans struggle to focus on. Think of a 40‑page policy document, a thread of internal tickets about a recurring incident, or months of status updates that need to be turned into a clean report. Most people postpone these tasks because they are time‑consuming and mentally draining. Claude, on the other hand, can calmly read large amounts of text, extract the key points, and present them in a structured way that different teams can understand.

In many companies, teams use Claude to summarize incident reports, generate draft responses for support, scan documentation for inconsistencies, and turn technical explanations into language that non‑technical stakeholders can follow. The emphasis is not on flashy “creative” output, but on clarity, consistency, and staying close to the source material. When the model is not sure, it is more likely to admit uncertainty or suggest follow‑up questions instead of inventing details.

Anthropic introduced a method called Constitutional AI. The basic idea is that instead of fixing bad outputs only after the fact, the AI is trained in advance to follow a set of written principles when it answers.

Those principles emphasize:

- safety

- truthfulness

- harm prevention

- clarity

Thus, when Claude answers, it’s not just predicting text. Its answers are shaped by the principles it was trained to follow.

Is it perfect? No. Is it more appropriate for work? Mostly, yes.

If you want a deeper technical explanation, IBM explains Constitutional AI clearly here: https://www.ibm.com/topics/constitutional-ai

Is Anthropic Safe for Business Use?

Short answer: largely, yes.

Longer answer: addressing this concern is the reason Anthropic exists in the first place.

Businesses are worried about:

- incorrect facts

- dangerous answers

- unclear or inconsistent wording

- AI that sounds overconfident in its answers

Anthropic builds its models with a focus on minimizing those issues. That is a large part of why many companies use Claude for internal applications rather than as a public chatbot.

It’s less intrusive. It’s more cautious. It gives fewer surprises.

For a business, that is usually a clear plus.

Where AI Fits with Website Monitoring and Reliability

Now let’s address something more down-to-earth.

AI excels at language; monitoring tools excel at facts.

If you use Webstatus247, you are alerted right away when a website goes down or its performance deteriorates. Webstatus247 monitors uptime, speed, and availability continuously.

Imagine your main application goes down at 2:14 a.m. on a Monday. Webstatus247 notices the outage within seconds and sends your on‑call engineer an alert through email, SMS, or your preferred channel. They jump in to investigate, restart services, and gradually bring everything back online. At 3:02 a.m., the system is stable again. But that is only half of the work.

That data is real. It can be measured.

Now you need to explain what happened to your internal stakeholders and, in some cases, to your customers. This is where a tool like Claude becomes useful. You can paste the raw incident timeline, logs, and internal chat messages into Claude and ask it to generate: a clear internal summary, a short update for your public status page, and talking points for your support team. Your monitoring tool tells you exactly what happened and when; Claude helps you communicate that story without losing important details or over‑complicating the message.
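To make that workflow concrete, here is a minimal sketch of the “paste the timeline into Claude” step: it turns timeline entries into one prompt asking for the three drafts described above. The `TimelineEvent` class, the `build_incident_prompt` function, and the calendar date are hypothetical, chosen only for illustration; the two timestamps come from the 2:14 a.m. / 3:02 a.m. example. It does not call any AI service, so it runs offline.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class TimelineEvent:
    """One entry from a hypothetical incident timeline (e.g. copied from monitoring alerts)."""
    at: datetime
    note: str


def build_incident_prompt(service: str, events: list[TimelineEvent]) -> str:
    """Hypothetical helper: turn raw timeline events into one prompt for three audiences."""
    ordered = sorted(events, key=lambda e: e.at)  # timelines are often pasted out of order
    minutes = int((ordered[-1].at - ordered[0].at).total_seconds() // 60)
    lines = [f"- {e.at:%H:%M} {e.note}" for e in ordered]
    return "\n".join([
        f"Incident on {service}, duration about {minutes} minutes.",
        "Timeline (facts from monitoring; do not add details that are not listed):",
        *lines,
        "",
        "Write: 1) an internal summary, 2) a short public status page update,",
        "3) talking points for the support team. Say so if anything is unclear.",
    ])


# Illustrative events based on the example above; the date itself is made up.
events = [
    TimelineEvent(datetime(2024, 1, 1, 3, 2), "Services stable again"),
    TimelineEvent(datetime(2024, 1, 1, 2, 14), "Monitoring alert: main application down"),
]
prompt = build_incident_prompt("main application", events)
print(prompt)
```

The prompt deliberately tells the model to stick to the listed facts, which keeps the monitoring data as the source of truth. Sending it to Claude (for example through Anthropic’s official Python SDK) is left out so the sketch stays self-contained, and any draft that comes back should still be checked against the actual alerts.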

But one thing needs to be clear.

AI should never be a substitute for monitoring.

Truth be told, no website can remain flawless forever. Things inevitably fail. Servers get slower. APIs break at the most inconvenient time.

That is why uptime monitoring is essential: https://www.webstatus247.com/uptime-monitoring

AI helps you understand and explain issues. Monitoring tools like Webstatus247 detect them.

They are both useful in their own ways.

Performance, Alerts, and Communication

If something goes wrong with performance, businesses need to know immediately. Not an hour later. Not after customers start complaining.

Webstatus247’s website performance monitoring helps you spot slow response times and other issues well before they frustrate your users: https://www.webstatus247.com/website-performance-monitoring

The first notification is most valuable when it arrives as a real-time alert: https://www.webstatus247.com/alerts-notifications

And when customers want to know what’s going on, a public status page helps you keep their trust: https://www.webstatus247.com/status-page

Anthropic vs OpenAI: two different priorities

People love comparisons, so let’s keep this calm.

OpenAI tends to emphasize broad capability and creativity, while Anthropic emphasizes safety and predictability.

Both are right in their ways.

For expressive content and experimentation, you might find other tools more appealing. But if you want your AI to behave itself in professional settings, Anthropic often feels like the safer choice.

No drama necessary.

Real Use Cases That Actually Make Sense

Let’s skip unrealistic examples.

Anthropic’s AI works best when:

- teams deal with long documents

- support teams need consistent answers

- technical teams must explain incidents clearly

- internal knowledge keeps growing

It does not:

- fix broken servers

- monitor websites

- replace engineers

That’s why tools like Webstatus247 still matter.

Monitoring shows you the truth.

AI helps you talk about it.

Common questions businesses ask about Anthropic

What is Anthropic best known for?

Anthropic is best known for Claude and its strong focus on AI safety and responsible behavior.

Is Claude free?

Claude offers a free plan and paid plans, which differ mainly in usage limits.

Is Anthropic only for developers?

No. Many non-technical teams use Claude daily for writing, reviewing, and summarizing content.

Can Anthropic replace monitoring tools?

No. AI explains issues. Monitoring tools like Webstatus247 detect and track them.

Why This Matters for Businesses

AI is becoming part of our daily work, like it or not.

It is no longer a question of whether to use AI, but rather which AI to trust.

Anthropic takes a more deliberate and cautious approach, one that puts control ahead of excitement. That approach makes sense for companies that prioritize accuracy, reliability, and trust.

When paired with effective monitoring tools such as Webstatus247, AI can help teams move faster without losing clarity.

And that balance matters.

Final thoughts: choosing AI that matches your

Anthropic isn’t working to dazzle you.

It’s simply aiming to act responsibly.

In a world brimming with big, loud promises, that really is refreshing. And it’s precisely what many companies require.